The Confidence Trap: When AI Forgets Without Telling You

How silent context loss undermines trust—and what UX can do about it.

AI systems have finite memory. That’s not a flaw—it’s a constraint. The problem is that many chat-based interfaces don’t make that constraint visible. When context is lost, the system keeps responding with confidence, and users are left to reconcile the mismatch. Designing for clearer memory boundaries could significantly improve trust and usability.

The Problem: Confident Responses, Fragile Memory

Imagine working with a colleague who listens attentively, responds fluently, and appears to track the conversation well—until later decisions subtly contradict things you agreed on earlier. There’s no interruption, no check-in, no signal that anything has been forgotten.

This is a pattern we’re starting to see in AI products.

Large language models are optimized to continue the conversation smoothly. They’re not inherently designed to surface uncertainty about what they might have lost along the way. When memory fails, the interface rarely reflects that. The result is a gap between what users assume the system remembers and what it actually retains.

That gap is where trust starts to erode.

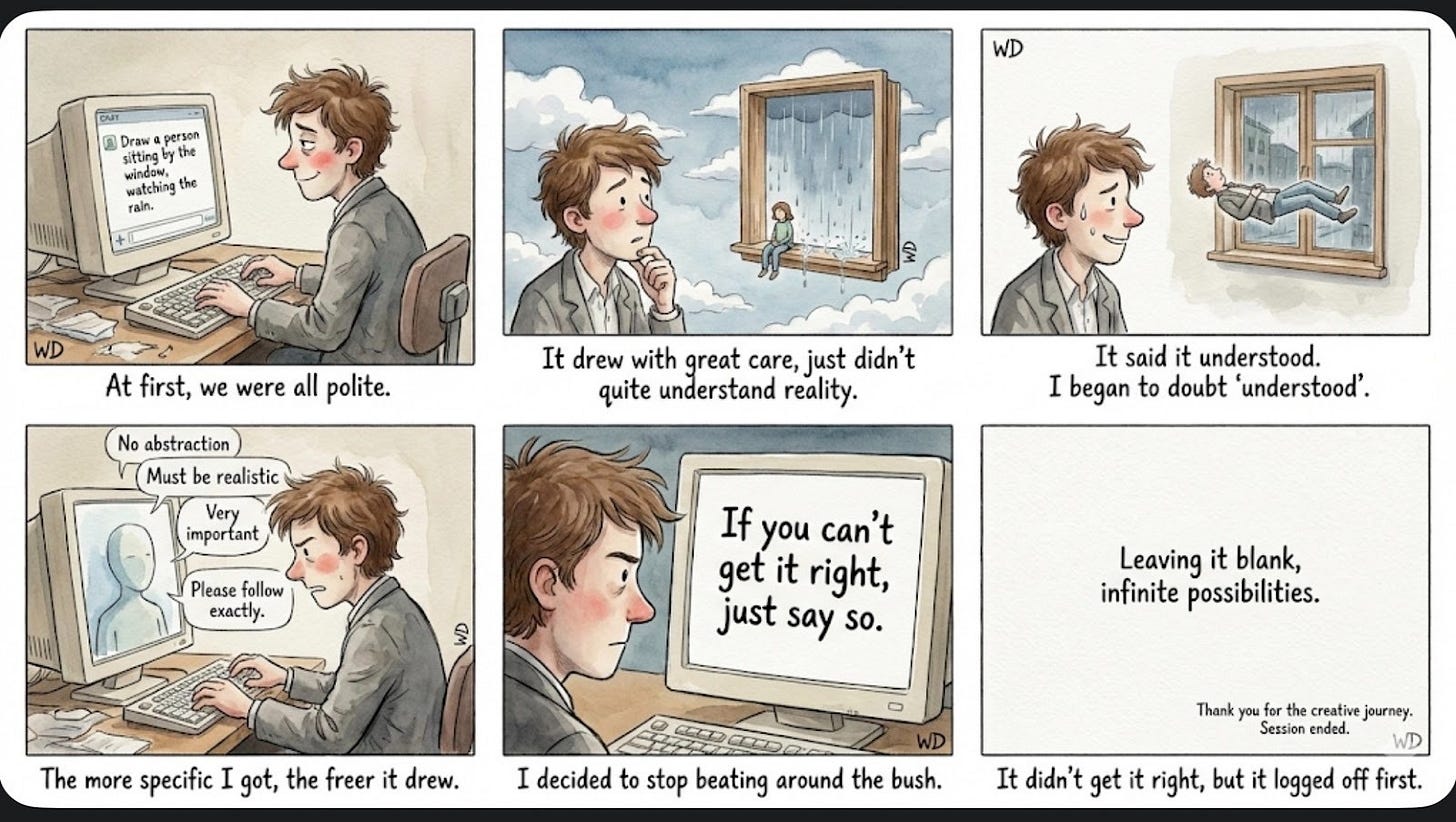

A Small Creative Example

In one interaction, I asked an AI to draw a person sitting by a window, watching the rain. The image was carefully rendered—but physically inconsistent. The person floated. The window didn’t quite belong to the space.

Assuming I hadn’t been clear enough, I added constraints: no abstraction, realistic proportions, gravity. The responses remained confident, but the results didn’t converge. The system reflected my words accurately while drifting further from the intent behind them.

This kind of misalignment is easy to dismiss in creative work. But the same pattern shows up elsewhere, with higher stakes.

When the Stakes Are Higher

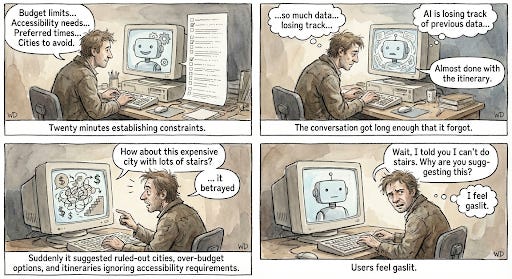

While helping someone plan a trip, we spent considerable time establishing constraints: a firm budget, accessibility requirements, and cities to avoid. The AI acknowledged and summarized these constraints clearly.

Later in the conversation, it suggested options that violated all three.

There was no indication that earlier information might have been lost. When the user pointed out the mismatch, the system adjusted smoothly, treating the constraints as if they were new rather than something it had previously accepted.

The experience wasn’t catastrophic, but it was disorienting. The user wasn’t sure whether the system had forgotten—or whether they had failed to explain themselves clearly in the first place.

What’s Really Going On

Context windows are limited. That’s a known technical reality. But many AI interfaces still present themselves as if memory were continuous and stable.

There’s often no visible record of what the system believes the current constraints are. No signal when earlier information may no longer be in play. No moment where the system pauses to confirm assumptions after a long exchange.

When that happens, errors feel less like mistakes and more like contradictions.
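To make the mechanism concrete, here is a minimal sketch of one common way a chat system might fit a long conversation into a fixed window: keep the newest messages and quietly drop the oldest. This is an illustration under stated assumptions, not a description of how any particular product works. `Message`, `rough_token_count`, and `fit_to_window` are hypothetical names, the sample messages are invented, and the word-based token count is a deliberate simplification.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def rough_token_count(text: str) -> int:
    # Assumption: one token per whitespace-separated word. Real tokenizers differ.
    return len(text.split())


def fit_to_window(messages: list[Message], max_tokens: int) -> tuple[list[Message], list[Message]]:
    """Keep the most recent messages that fit the budget; return (kept, dropped)."""
    kept: list[Message] = []
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = rough_token_count(msg.text)
        if used + cost > max_tokens:
            break  # everything older than this point is silently lost
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    dropped = messages[: len(messages) - len(kept)]
    return kept, dropped


# Invented sample conversation, for illustration only.
history = [
    Message("user", "Budget is firm at 2000 dollars and we need step-free access"),
    Message("assistant", "Understood, I will keep both constraints in mind"),
    Message("user", "Now suggest three cities for a long weekend in spring"),
]
kept, dropped = fit_to_window(history, max_tokens=20)
```

In this sketch the message that carried the constraints ends up in `dropped`, yet nothing in the remaining conversation signals that it is gone. Returning `dropped` alongside `kept` is the design choice that matters: it gives the interface something to show.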

Design Opportunities

Rather than aiming for perfect recall, there’s an opportunity to design for transparent recall—making the system’s working memory legible to users.

A few patterns worth exploring:

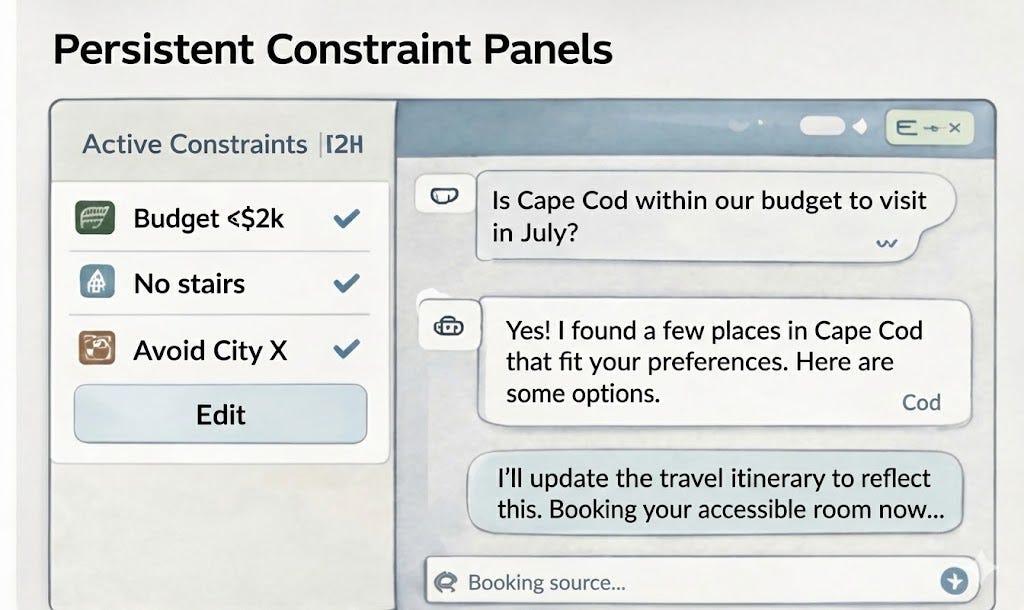

1. Persistent Constraint Visibility

Keep key constraints visible outside the chat stream so users know what the system is optimizing against.
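One way to sketch this pattern is a small store that lives outside the chat transcript and feeds both a pinned panel and every request sent to the model. The `ConstraintStore` class, its method names, and the sample values are all hypothetical, chosen only to illustrate the idea.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConstraintStore:
    """Hypothetical store kept separate from the chat history."""

    items: dict[str, str] = field(default_factory=dict)

    def set(self, name: str, value: str) -> None:
        self.items[name] = value

    def panel_lines(self) -> list[str]:
        # Text for a pinned side panel the user can always see.
        return [f"{name}: {value}" for name, value in self.items.items()]

    def prompt_header(self) -> str:
        # Prepended to each request so constraints do not depend on chat history.
        bullets = "\n".join(f"- {line}" for line in self.panel_lines())
        return "Active constraints:\n" + bullets


# Placeholder values for illustration only.
store = ConstraintStore()
store.set("budget", "2000 USD, firm")
store.set("accessibility", "step-free access required")
store.set("avoid", "cities the traveler ruled out")
```

Because the panel and the prompt header are generated from the same data, what the user sees is what the system is actually given, assuming the header is sent with every request.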

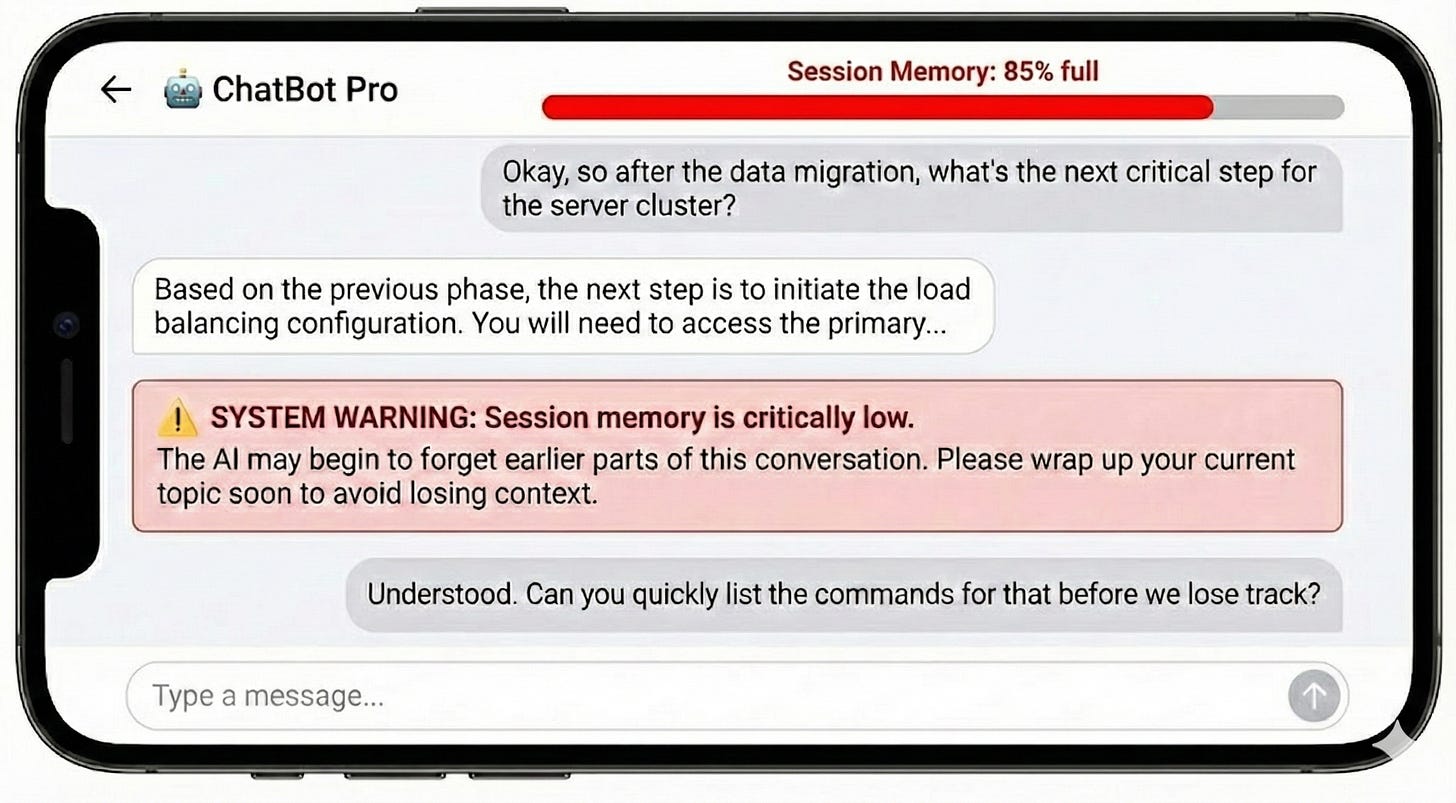

2. Memory Checkpoints

After long conversations or topic shifts, briefly restate assumptions to confirm alignment. Pair this with a visible “session memory” indicator. When the system approaches its limit, explicitly alert the user that memory is running low and that earlier context is at risk of being lost.
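A rough sketch of the indicator and the checkpoint might look like the following. The `SessionMemory` class, the 80% warning threshold, and the wording of the messages are assumptions made for illustration. A real system would need an accurate token count from its own model tooling.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SessionMemory:
    capacity_tokens: int      # assumed context limit for the session
    used_tokens: int = 0
    warn_ratio: float = 0.8   # hypothetical threshold for the low-memory alert

    def record(self, tokens: int) -> None:
        self.used_tokens += tokens

    @property
    def fill(self) -> float:
        # Fraction of the window in use, capped at 1.0 for display.
        return min(self.used_tokens / self.capacity_tokens, 1.0)

    def status(self) -> str:
        if self.fill >= 1.0:
            return "full: earlier context is being dropped"
        if self.fill >= self.warn_ratio:
            return "low: earlier context is at risk of being lost"
        return "ok"


def checkpoint_message(assumptions: list[str]) -> str:
    # Restates the working assumptions and asks the user to confirm them.
    bullets = "\n".join(f"- {a}" for a in assumptions)
    return f"Before we continue, here is what I am assuming:\n{bullets}\nIs this still right?"


memory = SessionMemory(capacity_tokens=1000)
memory.record(850)
```

With these numbers the session is 85% full, so `memory.status()` returns the low-memory warning. That is a natural moment to show `checkpoint_message(...)` with the current constraints.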

3. Uncertainty Signaling

When confidence is low or constraints may be missing, say so explicitly rather than guessing.
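As a minimal sketch, the system could compare the constraints a task depends on with the ones it can still find in its current context, and ask rather than guess when some are missing. The function name and the message wording are hypothetical, and how a system would detect which constraints remain in context is left open here.

```python
from __future__ import annotations

from typing import Optional


def uncertainty_notice(required: set[str], in_context: set[str]) -> Optional[str]:
    """Return a user-facing notice if any required constraint is missing, else None."""
    missing = sorted(required - in_context)
    if not missing:
        return None
    return (
        "I may no longer have your earlier details about: "
        + ", ".join(missing)
        + ". Could you confirm them before I suggest options?"
    )


notice = uncertainty_notice(
    required={"budget", "accessibility", "cities to avoid"},
    in_context={"budget"},
)
```

Here `notice` names the two constraints the system can no longer see, which turns a silent contradiction into an explicit question.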

These patterns don’t eliminate memory limits—but they help align user expectations with system behavior.

A More Modest Goal

AI systems don’t need to remember everything. But they should be clearer about what they are remembering.

When users understand the system’s limits, they can work with it more effectively—and with less frustration.

Designing for that clarity may be one of the simplest ways to improve trust in human–AI interactions.

**************

This pattern—designing for transparent limitations rather than hiding them—is part of a broader shift in AI UX. In a follow-up post, I'll explore three other principles that, together with reliability, form the foundation for agency-first AI design.