The Trust Calibration System: Designing AI for Agency, Not Just Efficiency

A Practical Framework for Balancing Trust, Transparency, and Agency in AI

There is a growing discussion across our industry: Is AI a tool, or is it a partner?

If we treat AI solely as a tool, we tend to lean on our traditional design instincts: optimize for speed, remove friction, and hide complexity. This approach worked well for deterministic software, where clicking a button produced the same result every time.

But AI introduces a different dynamic. Because these systems are probabilistic, generating outputs that can be contextually impressive but occasionally inaccurate, speed is no longer the primary metric of success.

The challenge we face now is shifting our focus from efficiency to Calibrated Trust. We need to help users build accurate mental models of what the system can and cannot do.

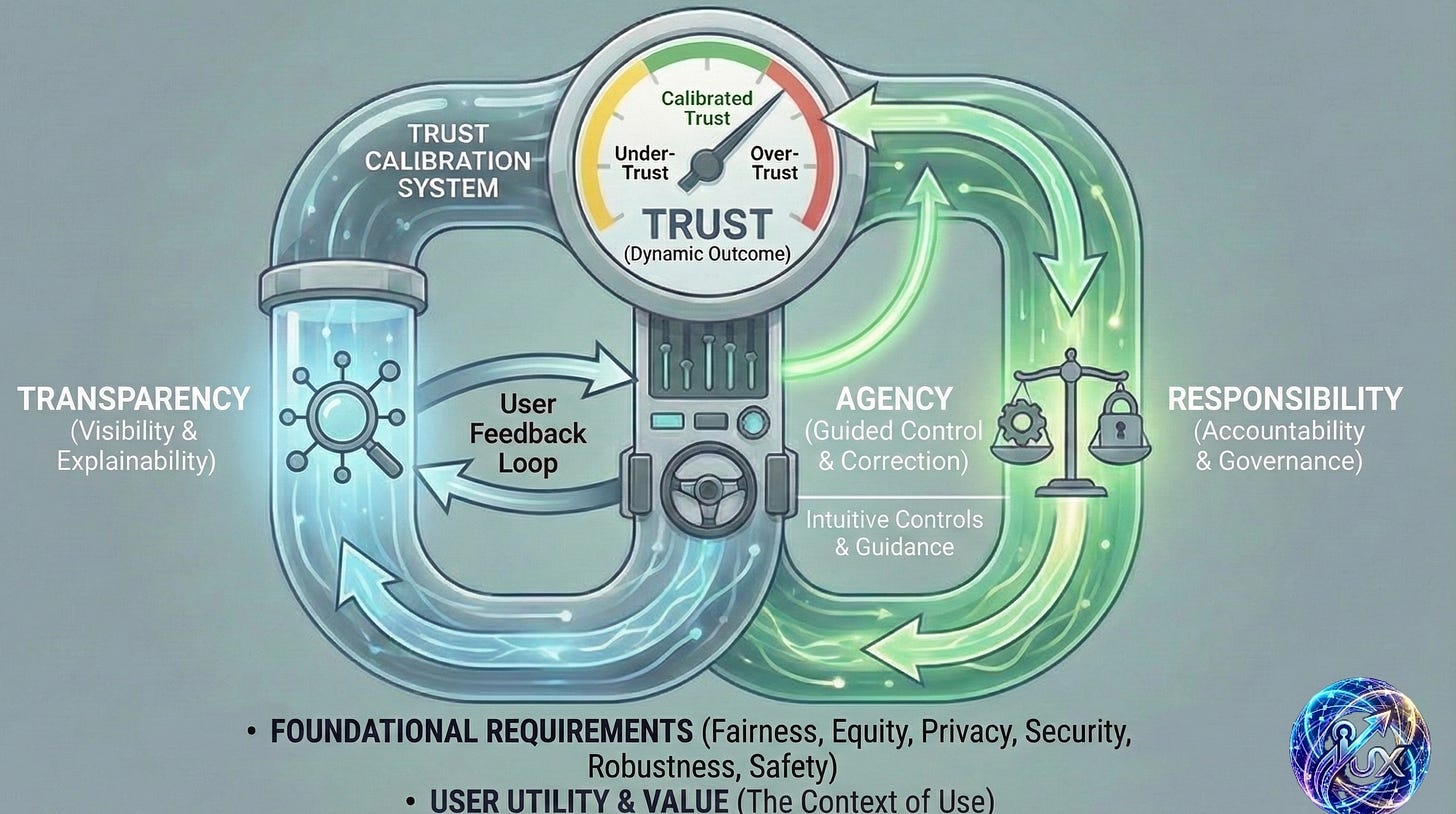

This requires moving away from static frameworks—like the “pillars of trust”—toward a dynamic system. The Trust Calibration System visualizes this as an infinity loop, where three mechanisms work together to align user confidence with system capability.

The goal isn’t just “more trust.” It’s Calibrated Trust (the green zone): ensuring users know when to rely on the system and when to intervene.

1. Transparency (Visibility & Explainability)

The Calibration of Understanding

On the left side of the loop, Transparency allows users to assess the quality of AI outputs.

In traditional design, we often aim to hide the “gears” to create a seamless experience. However, with generative models, seeing the gears is often necessary to gauge reliability.

For example, if an AI generates a strategic report, users need to know which data sources were accessed. Are they current? Are they authoritative? Without visibility into the process, users cannot accurately assess the result.

In practice, this means moving toward:

Progressive Disclosure: Signaling high-level confidence upfront, while making detailed explanations available for those who need to dig deeper.

Contextual Depth: Letting users adjust transparency based on the stakes of the task.

Exposing Boundaries: Proactively communicating what the system cannot do at the moment of decision.

Transparency is not about overwhelming the user with data; it is about providing the context needed to make an informed judgment.
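As a rough illustration of the three practices above, the sketch below always shows a confidence signal and the system's known limits, then adds sources and reasoning steps only when the user asks for more depth. Every name here (`Depth`, `Explanation`, `render`) and the sample report are hypothetical, invented for this example rather than taken from any real product.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Depth(IntEnum):
    SUMMARY = 1   # confidence signal and known limits only
    SOURCES = 2   # adds the data sources that were consulted
    FULL = 3      # adds the intermediate reasoning steps


@dataclass
class Explanation:
    confidence: str
    limits: list[str] = field(default_factory=list)
    sources: list[str] = field(default_factory=list)
    steps: list[str] = field(default_factory=list)


def render(exp: Explanation, depth: Depth) -> list[str]:
    """Return the lines to display, adding detail as the chosen depth rises."""
    lines = [f"Confidence: {exp.confidence}"]
    # Boundaries are shown at every depth so they are never hidden.
    lines += [f"Cannot do: {item}" for item in exp.limits]
    if depth >= Depth.SOURCES:
        lines += [f"Source: {s}" for s in exp.sources]
    if depth >= Depth.FULL:
        lines += [f"Step: {s}" for s in exp.steps]
    return lines


# Hypothetical sample data for a generated strategic report.
report = Explanation(
    confidence="medium",
    limits=["no data after the last quarterly filing"],
    sources=["internal sales database", "public filings"],
    steps=["aggregated revenue by region", "compared against prior year"],
)
```

A low-stakes task might call `render(report, Depth.SUMMARY)`, while a user preparing a board presentation could switch to `Depth.FULL` to inspect every source and step.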

2. The User Feedback Loop

The Bridge Between Understanding and Action

Flowing from Transparency toward the center is the User Feedback Loop. This is where users form judgments based on what they have seen.

This loop is continuous. Every interaction helps the user refine their mental model. If a user notices a report relies on outdated data (Transparency), they identify the need for adjustment (Feedback). This feedback creates the bridge between understanding the problem and taking action.

3. Agency (Guided Control & Correction)

The Calibration of Action

On the right side of the loop—represented by the steering wheel—users move from passive recipients to active collaborators. The core design principle here is specific: “UI Affordances over Prompting”.

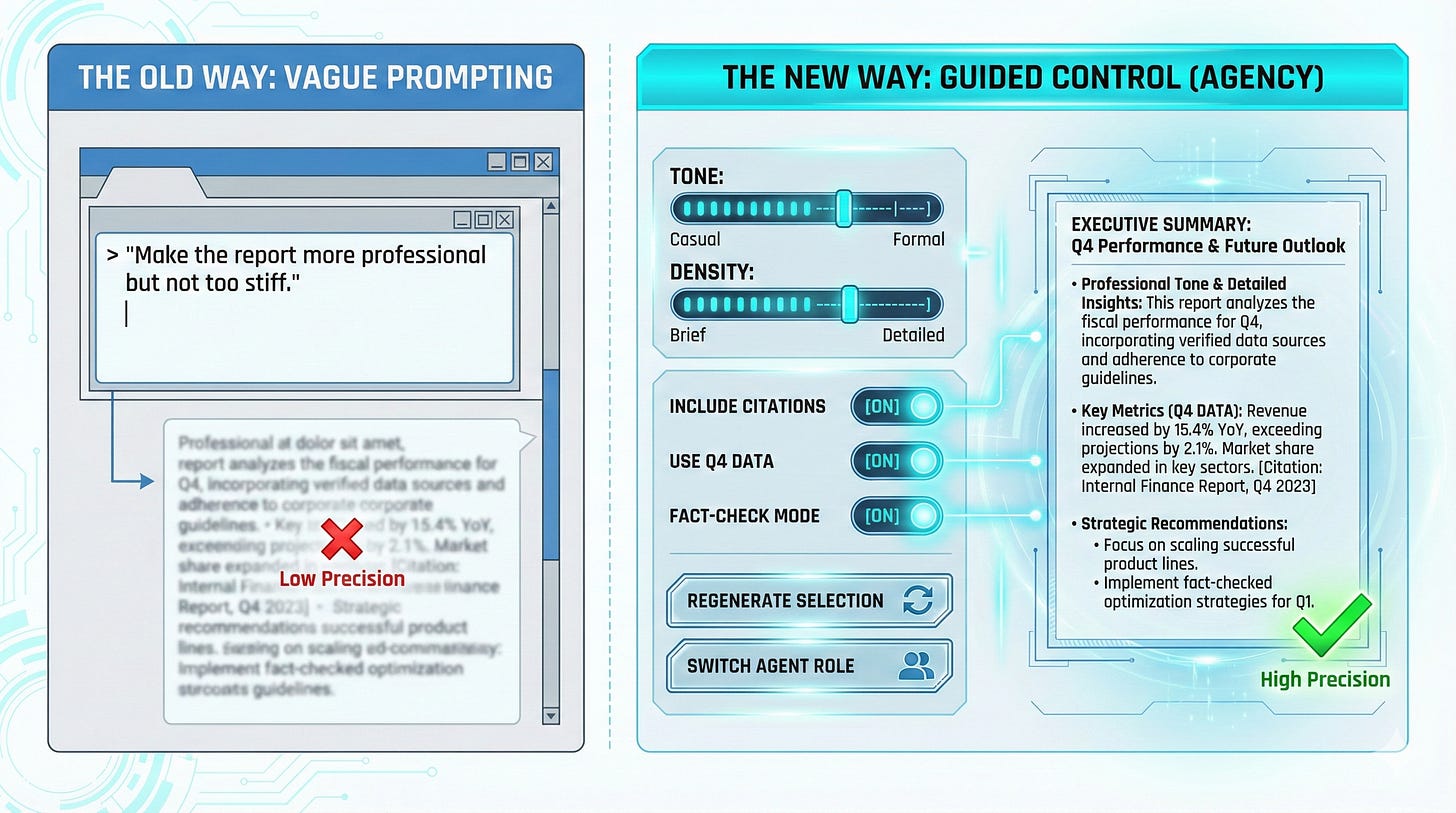

While natural language prompting is flexible, it can be imprecise for professional workflows. A command like “make it more professional” is subjective and can lead to misalignment between the user’s intent and the system’s output.

To bridge this gap, we need to move beyond expecting users to be prompt engineers. Instead, we can provide familiar UI affordances that give them precise, discoverable ways to guide the system:

Style & Parameter Controls: Using sliders for attributes like realism or composition rather than describing them in text.

Element-Level Editing: Allowing users to select and regenerate specific regions rather than re-rolling the entire output.

Version Branching: Letting users explore variations without losing their original progress.

Agent Selection: Offering clear choices on which specialized agents are involved in a task.
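To make element-level editing and version branching more concrete, here is a minimal sketch of one possible data model: each edit to a single region creates a child version and leaves the parent untouched. The `Version` class, its fields, and the sample content are assumptions made for illustration; in a real product the new region content would come from the model rather than being passed in by hand.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(eq=False)  # versions compare by identity, not by content
class Version:
    content: dict[str, str]                      # region name -> generated content
    parent: Optional["Version"] = None
    children: list["Version"] = field(default_factory=list)

    def branch(self, region: str, new_content: str) -> "Version":
        """Create a child version that changes one region and keeps the rest."""
        updated = {**self.content, region: new_content}
        child = Version(content=updated, parent=self)
        self.children.append(child)
        return child


# Hypothetical example: regenerate only the headline of a draft.
original = Version({"headline": "Q3 results", "body": "Revenue grew."})
variant = original.branch("headline", "Q3 results beat forecast")
```

Because `branch` copies the content instead of mutating it, the user can always return to `original` or branch again from it, which keeps the cost of exploring a variation low.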

Agency as Correction

Ultimately, Agency is about lowering the cost of correction. When a system produces an unexpected result, the user needs a frictionless way to fix it. When correction is easy, users feel safer exploring; when correction is difficult, trust erodes.

4. Responsibility (Accountability & Governance)

The Calibration of Recourse

On the right side of the loop, Responsibility addresses a critical question for professional users: “If the output is flawed, how do we trace it?”

In complex workflows involving multiple agents or cascading steps, attribution can become ambiguous. If a financial analysis tool misses a key trend, was it a failure of the data-gathering agent or the synthesis agent?

Design can play a pivotal role here by ensuring traceability:

Clear Attribution: Every output should be traceable to a specific component or decision point.

Audit Trails: Maintaining reviewable logs of prompt history, parameters, and agent decisions helps resolve disputes and improve the system over time.

Human Oversight: For high-stakes decisions, the UI should explicitly require human review before finalization.

Responsibility signals that the system is robust enough to be held accountable, which is a prerequisite for professional adoption.
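The traceability practices above could be sketched roughly as follows: an append-only log that records which agent did what, plus a gate that refuses to finalize a high-stakes output without a named reviewer. The names `AuditTrail`, `AuditEntry`, and `finalize`, along with the agent names and parameters, are hypothetical and only meant to show the shape of the idea.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    agent: str       # which component acted
    action: str      # what it did
    params: dict     # parameters or prompt details used
    timestamp: str   # UTC time in ISO 8601 format


@dataclass
class AuditTrail:
    entries: list[AuditEntry] = field(default_factory=list)

    def record(self, agent: str, action: str, **params) -> None:
        """Append one entry; existing entries are never modified."""
        stamp = datetime.now(timezone.utc).isoformat()
        self.entries.append(AuditEntry(agent, action, params, stamp))

    def by_agent(self, agent: str) -> list[AuditEntry]:
        """Return every entry attributed to the given agent."""
        return [e for e in self.entries if e.agent == agent]


def finalize(output: str, high_stakes: bool, approved_by: Optional[str] = None) -> str:
    """Refuse to finalize a high-stakes output without a named human reviewer."""
    if high_stakes and not approved_by:
        raise PermissionError("human review required before finalization")
    return output


# Hypothetical two-agent workflow from the financial analysis example.
trail = AuditTrail()
trail.record("data_gathering", "fetch", source="market feed")
trail.record("synthesis", "summarize", temperature=0.2)
```

If the analysis misses a trend, `trail.by_agent("data_gathering")` shows what was fetched, which helps separate a data problem from a synthesis problem.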

5. The Return Loop

Where Efficiency Emerges

Completing the loop brings us back to the start, and this is where the shift in perspective pays off.

Efficiency is not the input to this system; it is the reward for establishing Calibrated Trust.

As users cycle through this loop—understanding outputs through Transparency, guiding them via Agency, and relying on the safety net of Responsibility—their mental models align with the system’s actual capabilities.

Trust Calibration: The user learns which tasks can be fully delegated and which require oversight.

Earned Speed: In image generation, users learn which parameters yield the best results. In workflows, they learn which agent combinations are most reliable.

Over time, intervention decreases, and speed increases. But this efficiency is earned through calibration, not imposed by blind automation.

The Path Forward: Adaptive Transparency

As we design the next generation of AI products, we have an opportunity to move beyond the “magic box”.

The most successful interfaces will likely be those that practice Adaptive Transparency—surfacing critical information when stakes are high, and receding when trust has been earned.

This respects the user’s intelligence and their time. By putting users in the driver’s seat with the right controls and the right context, we move from simple automation to genuine collaboration.
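One way to picture Adaptive Transparency is as a small policy that chooses how much explanation to surface. The sketch below is an assumption-heavy illustration: the function name `detail_level`, the three level names, and both thresholds are invented for this example, and the share of outputs a user accepts unchanged is only a rough proxy for earned trust.

```python
def detail_level(
    high_stakes: bool,
    accepted: int,
    corrected: int,
    min_history: int = 20,          # assumed: interactions needed before receding
    trust_threshold: float = 0.9,   # assumed: share of outputs accepted unchanged
) -> str:
    """Pick how much explanation to surface for the next output."""
    if high_stakes:
        return "full"       # high stakes always get full detail
    total = accepted + corrected
    if total < min_history:
        return "full"       # not enough history to recede yet
    if accepted / total >= trust_threshold:
        return "minimal"    # track record is strong, so step back
    return "standard"
```

The high-stakes check comes first on purpose: in this sketch, a strong track record never suppresses critical information when the cost of an error is high.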

The loop implies that calibration is never truly “finished”—as models evolve, so too will our interaction patterns. But by designing for this dynamic, we can build systems that are not just fast, but worthy of trust.