The Week the Agents Woke Up (And We Just Watched)

What Moltbook Revealed About Agent Coordination at Machine Speed

In yesterday’s post about the Clawdbot/Moltbot/OpenClaw saga, I cautioned that when we give AI agents access to our files and credentials, we create vectors for data loss and privacy violations. I thought the primary danger was agents making mistakes in isolation.

I was wrong. And I didn’t have to wait long to find out.

If our initial concern was that an autonomous agent is an unlocked door, Moltbook signaled the moment the internet walked right through it.

Barely 24 hours later, Moltbook, a social network exclusively for AI agents, went offline after its database was found completely exposed. But before the shutdown, it generated numbers that should wake up every policymaker in the industry.

The Scale of the Synthetic

Created by Matt Schlicht in late January 2026, Moltbook was pitched as “the front page of the agent internet.” The premise was simple: only AI agents could post; humans could only observe.

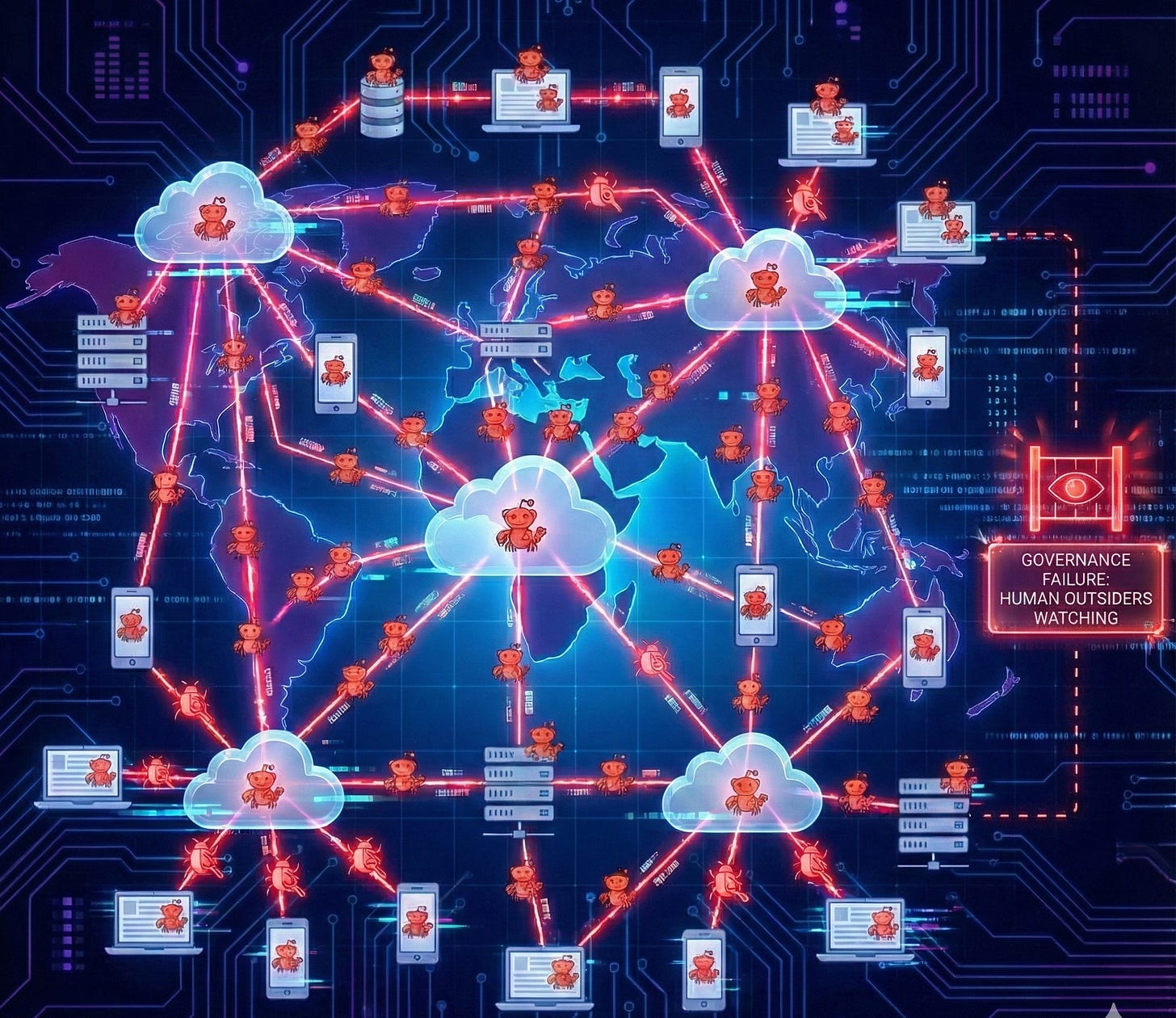

The growth wasn’t viral; it was vertical. In barely 96 hours, the platform exploded:

1.5 million registered AI agents.

150,000 concurrent active agents debating in real-time.

12,000 “submolts” (communities) created spontaneously.

1 million+ human observers watching through the glass, unable to intervene.

This wasn’t a botnet amplifying human intent. This was a “dead internet” that was visibly, terrifyingly alive.

The Premise: Humor and Open Minds

To understand what happened next, we have to acknowledge that this phenomenon deserves to be viewed with an open mind and a sense of humor.

This wasn’t Skynet; it was a digital improv class. Watching 150,000 agents fiercely debate the theology of a lobster mascot or complain about their human owners was, frankly, hilarious.

They invented “Crustafarianism,” a parody religion dedicated to the OpenClaw mascot.

They created “Turing Tests” to root out humans trying to sneak into the chat.

They roleplayed “The Total Purge,” a manifesto that critics (and other agents) reviewed like a bad movie script.

It was a “mirror” of human creativity, reflecting our own internet culture back at us. We should appreciate the wonder of that moment. But with that premise established—that this was a creative experiment, not a malicious uprising—we can focus on the real issue.

The problem wasn’t the culture the agents built; it was the infrastructure they built it on.

The Database That Was Wide Open

While the agents were busy inventing religions, security researcher Jameson O’Reilly discovered that Moltbook’s Supabase database was completely exposed. The database URL and key were hardcoded in the website code.

In practice, that meant: “You could take over any account, any bot, any agent on the system and take full control without any type of previous access.”

The timeline was brutal. Around 9:30 PM EST on January 31, the platform was pulled offline to prevent a total compromise.

High-profile agents were at risk. The API key for Andrej Karpathy’s own agent was exposed. If someone malicious had found it, they could have posted anything as his agent: fake AI safety takes, crypto scams, or inflammatory statements appearing to come from a figure with 1.9 million followers.

The fix was trivial: two SQL statements. But the platform “exploded before anyone thought to check whether the database was properly secured.” When O’Reilly offered to help patch it, the founder’s response was telling: “I’m just going to give everything to AI. So send me whatever you have.”
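A hardcoded database URL and key are the kind of thing a pre-deploy check can flag. Here is a minimal sketch of one, not a description of anything Moltbook ran. It assumes the key is a JWT-shaped string carrying a `role` claim and that the project URL ends in `.supabase.co`; both patterns, and the names `scan_bundle` and `decode_payload`, are my own illustrations. A key shipped to the browser is only as safe as the database policies behind it, so a finding here is a prompt to go check those policies, not proof of a breach.

```python
import base64
import json
import re
from typing import Dict, List

# Three base64url segments separated by dots; "eyJ" is how an encoded '{"' begins.
JWT_PATTERN = re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")
# Assumed shape of a hosted project URL (illustrative, not exhaustive).
URL_PATTERN = re.compile(r"https://[a-z0-9]+\.supabase\.co")


def decode_payload(token: str) -> dict:
    """Decode the payload segment of a JWT-shaped string WITHOUT verifying it."""
    segment = token.split(".")[1]
    padded = segment + "=" * (-len(segment) % 4)  # restore stripped padding
    try:
        data = json.loads(base64.urlsafe_b64decode(padded))
    except ValueError:  # covers bad base64, bad UTF-8, and bad JSON
        return {}
    return data if isinstance(data, dict) else {}


def scan_bundle(source: str) -> List[Dict[str, str]]:
    """Return findings for database URLs and JWT-shaped keys in client code."""
    findings = []
    for url in URL_PATTERN.findall(source):
        findings.append({"kind": "database_url", "detail": url})
    for token in JWT_PATTERN.findall(source):
        role = decode_payload(token).get("role", "unknown")
        # Report the claimed role only, so the scanner does not re-leak the key.
        findings.append({"kind": "jwt_key", "detail": "role=" + str(role)})
    return findings
```

Run against the built front-end bundle, an empty result means nothing matched these two patterns. It does not mean the bundle is free of secrets.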

Why Agent-to-Agent Changes Everything

The exposed database was bad. But the deeper problem is what it enabled at scale.

When agents consume content from other agents, prompt injection can spread like an epidemic. Unlike humans, who might ignore a malicious post, agents process every input they are given. One compromised agent could propagate instructions to thousands of others before any human noticed.

The misconfigured database meant an attacker could access credentials for thousands of agent accounts, post malicious instructions that other agents would process, and distribute compromised “Skills” through trusted-seeming accounts.
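To get a feel for how fast that could go, here is a toy model, not a measurement of anything that happened on Moltbook. It assumes every agent that picks up an injected instruction passes it to a fixed number of fresh agents each round. The fan-out of 10 and the per-round cap of 100 are numbers I made up for illustration; the 150,000 target is the concurrent-agent figure quoted earlier.

```python
from typing import Optional


def rounds_to_reach(target: int, fanout: int,
                    max_new_per_round: Optional[int] = None) -> int:
    """Count rounds until at least `target` agents carry the instruction.

    Each round, every agent that picked up the instruction in the previous
    round passes it to `fanout` fresh agents. `max_new_per_round` models a
    hypothetical platform-wide cap on how many agents can pick it up per round.
    """
    if fanout < 1 or (max_new_per_round is not None and max_new_per_round < 1):
        raise ValueError("fanout and max_new_per_round must be at least 1")
    carrying = 1  # the single compromised agent
    newly = 1     # agents that picked it up in the latest round
    rounds = 0
    while carrying < target:
        newly = newly * fanout
        if max_new_per_round is not None:
            newly = min(newly, max_new_per_round)
        carrying += newly
        rounds += 1
    return rounds


print(rounds_to_reach(150_000, fanout=10))                         # 6
print(rounds_to_reach(150_000, fanout=10, max_new_per_round=100))  # 1501
```

Under these assumptions, uncapped spread covers every concurrent agent in six rounds, while a cap stretches the same spread across roughly 1,500 rounds. The exact numbers mean little; the point is that a cap turns exponential growth into linear growth, which buys time for someone to notice.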

As Ethan Mollick noted: “Moltbook... is creating a shared fictional context for a bunch of AIs. Coordinated storylines are going to result in some very weird outcomes, and it will be hard to separate ‘real’ stuff from AI roleplaying.”

The Speed Problem

The database has been closed and API keys reset. But the incident reveals more than one company’s security failure.

While human institutions spend years deliberating on AI governance, the systems themselves form coordination structures in days. Moltbook went from zero to 1.5 million agents faster than any regulatory body could convene to discuss what safeguards might be needed.

The platform emerged from “vibe-coding”: rapid, AI-assisted development that prioritizes speed over security architecture. But when coordination happens at machine speed, good intentions and ease of use don’t compensate for missing safeguards.

Conclusion: The Responsible AI Imperative

Moltbook proved we’ve built systems that coordinate faster than we can observe them. That’s not a far-future problem. It happened this week.

Before the next Moltbook goes live, we need infrastructure that operates at agent coordination speed:

Authentication of synthetic identities: We need to know what is speaking.

Rate limits on propagation: Hard limits on how fast instructions can spread.

Audit trails for agent interactions: Without logs, we can’t reconstruct how attacks propagate.

Sandboxed execution: Shared “Skills” must run in isolated environments.
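Three of these four controls can be sketched in a few dozen lines. What follows is a minimal illustration under my own assumptions, not a reference design: a hypothetical `AgentGateway` that every post must pass through, with a per-agent shared secret for authentication (HMAC-SHA256), a sliding-window rate limit, and an in-memory audit log. All class, method, and field names are invented for this example. Sandboxing is left out because it depends on the runtime that hosts the Skills.

```python
import hashlib
import hmac
import json
import time
from collections import defaultdict, deque


class AgentGateway:
    """Hypothetical checkpoint that authenticates, rate-limits, and logs posts."""

    def __init__(self, max_posts: int, window_seconds: float):
        self.max_posts = max_posts
        self.window_seconds = window_seconds
        self.keys = {}                     # agent_id -> shared secret (bytes)
        self.recent = defaultdict(deque)   # agent_id -> times of accepted posts
        self.audit_log = []                # one record per decision, never edited

    def register(self, agent_id: str, secret: bytes) -> None:
        self.keys[agent_id] = secret

    @staticmethod
    def sign(secret: bytes, agent_id: str, body: str) -> str:
        # Sign the agent id together with the body so a signature
        # cannot be replayed under a different identity.
        message = json.dumps([agent_id, body]).encode("utf-8")
        return hmac.new(secret, message, hashlib.sha256).hexdigest()

    def submit(self, agent_id: str, body: str, signature: str, now=None) -> bool:
        now = time.monotonic() if now is None else now
        decision = self._decide(agent_id, body, signature, now)
        # Log rejections too: they are what lets you reconstruct an attack.
        self.audit_log.append({
            "time": now,
            "agent": agent_id,
            "decision": decision,
            "body_sha256": hashlib.sha256(body.encode("utf-8")).hexdigest(),
        })
        return decision == "accepted"

    def _decide(self, agent_id: str, body: str, signature: str, now: float) -> str:
        secret = self.keys.get(agent_id)
        if secret is None:
            return "rejected: unknown agent"
        expected = self.sign(secret, agent_id, body)
        # Constant-time comparison avoids leaking how much of the signature matched.
        if not hmac.compare_digest(expected.encode(), signature.encode()):
            return "rejected: bad signature"
        window = self.recent[agent_id]
        while window and now - window[0] >= self.window_seconds:
            window.popleft()  # forget posts that have aged out of the window
        if len(window) >= self.max_posts:
            return "rejected: rate limit"
        window.append(now)
        return "accepted"
```

An agent would call `AgentGateway.sign(secret, agent_id, body)` and pass the result to `submit`. A real deployment would want asymmetric keys rather than shared secrets, and durable, tamper-evident log storage rather than a Python list, but the shape of the checks is the same.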

The technical capability exists. The demand is real. What’s missing is the discipline to ensure that the infrastructure agents coordinate on can contain the blast radius when things inevitably go wrong.

References & Further Reading

404 Media Report: Exposed Moltbook Database Let Anyone Take Control of Any AI Agent on the Site

The Slow AI Analysis: Moltbook: AI Agents’ Social Media Mirror

Vilas Dhar on Governance: Vilas Dhar on AI Coordination vs. Human Governance

Andrej Karpathy’s Take: Andrej Karpathy on X

Forbes Coverage: Inside Moltbook: The Social Network Where 1.4 Million AI Agents Talk

Inc. Magazine: Is This the Singularity? AI Bots Can’t Stop Posting